Foliage

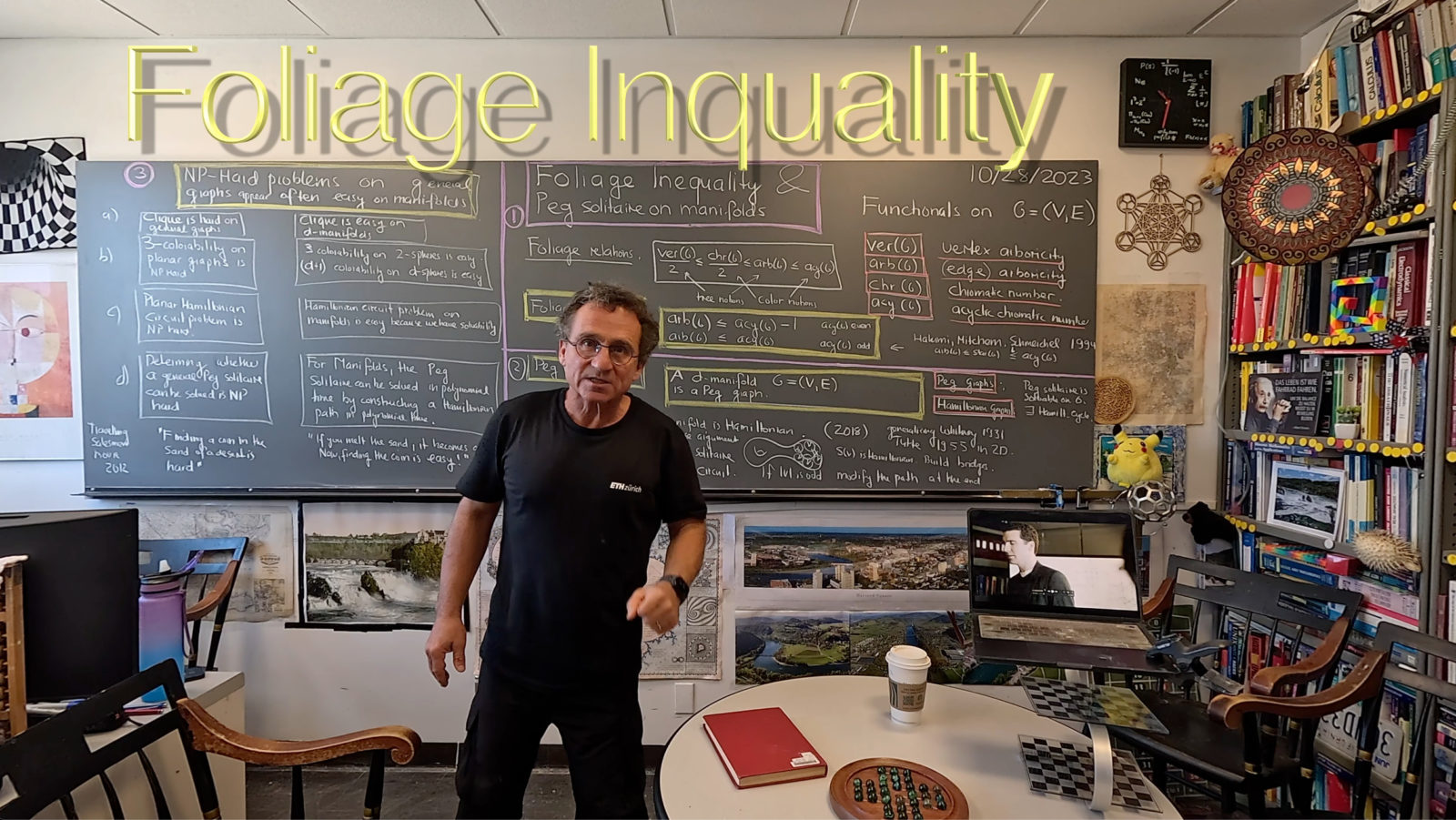

Arboricity and Chromatic number are linked in various ways. Here is a triple inequality, the foliage inequality which is a sandwich inequality linking trees with colors:

ver(G)/2 less or equal than chr(G)/2 less or equal than arb(G) less or equal than acy(G)

where ver(G) is the vertex arboricity, chr(G) the chromatic number, arb(G) the arboricity and acy(G) the acyclic chromatic number. [Sometimes I hate wordpress like refusing to accept standard HTML inequality code or not able to put mathematical formulas fit with a color background]. The three first inequalities follow from the definitions. The first inequality follows from the fact that an independent set is a forest. The second because every forest can be colored by 2 colors. The third one is the only non-obvious statement and follows from a result of Hakimi, Mitchem and Schmeichel stating that the star arboricity is bounded above by the acyclic chromatic number. I gave a new proof slightly improving on the inequality if the acyclic chromatic number is even. This is how I started in that entire business of arboricity; I upgraded the 4-color theorem first to the statement that for aprismatic 2-spheres, the acyclic chromatic number is 4 too which is not obvious but follows if we can recolor a four coloring to have all 6 type of Kempe chains to be forests. Then, I had grouped the 6 Kempe types into three type of forests showing that four forests suffice. It took a few weeks to see this is as a folklore result floating around in various places but in the literature first appearing first by Algor- Alon in 1989. It had been a funny episode because various AI entites (they have read hundreds of thousands of books alone and picked so up a log of knowledge) seem to “know” the result but none was pointing to a valid reference and the “proofs” given were always slightly flawed (but fixable). I then proved more generally that for 2-manifolds different from 2-spheres, the arboricity is always 4 and only then saw that in three or more dimensions, the arboricity can be arbitrary large. I know therefore know now

In any dimension d larger than 2 and any type of manifold and any constant a, there is a manifold so that the acyclic chromatic number is larger than a.

Peg solitaire

We had a wooden Peg Solitaire at home in our living room. It is a nice puzzle. A bit frustrating if one ends up with 2 pegs left which can not be joined. There had been a Math table talk last Wednesday by one of our undergraduates (Daniel Sheremata) who showed some spectacular general results on peg solitaire on graphs. During the talk, I started to think whether such soliaire would be difficult on manifolds. Manifolds have been used for puzzle games since the very beginning. Most of them, like chess, checkers or go are manifolds with boundary. Historically important is the Icosian game by nothing lesser than William Rowan Hamilton (the Hamilton from Hamiltonian, or Hamiltonian graph or quaternions). The game asked to find a Hamiltonian cycle on the dodecahedron which is equivalent to find a Hamiltonian path on the faces of the icosahedron. Peg solitaire is very much related to Hamiltonian paths because if one has a Hamiltonian path in a graph, then one can just restrict the solitaire to this path! This makes the solution very easy. I proved once in 2018 that discrete manifolds are Hamiltonian generalizing a result of Whitney and Tutte (20 years late) who established that in two dimensions. The two dimensional case is actually the most difficult one. In higher dimensions, one has more leverage. The proof is the “Swiss Cheese argument” and reduces the task to one dimension lower: just build first Hamiltonian paths on unit spheres packing the manifold then build detours to include the center and build bridges to join the various paths to one path. Since by definition. I honored my “Swiss connection” by wearing a ETH shirt today.

Peg Solitaire can be solved easily on a d-manifold with an even number of vertices.

Difficult problems

It is well known that WW I was the chemists war, that WW 2, the physicists war and that WW 3 will be the computer science war. Information is the new most dangerous weapon. Paralleled by these prospects are that chemistry (in the form of human chemical intervention with our natural resources), physics (in the form of still even present nuclear arsenals) and computer science (in the presence of AI tools which could obliterate our “purpose of living” and destroy us through nihilism). Humanity is extremely bad in understanding risks; it is very evident now that we overreacted with panic to a in comparison relatively lower scale risk, almost killing our economic resources (definitely killing the resources of many developing countries and so indirectly leading to famine and war) and so obliterate our ability to tackle political and economic necessities for survival like developing new technologies to solve the challenges that await. [The ramifications of the pandemic might still kill us in the near future, not because of the virus but because we bang ourselves the heads in during a new big war. Conflicts happens already in various parts of the word. Not seeing the relations between economic prosperity and fundamental challenges is tragic.] . Physics definitely lost its innocence when creating the atomic bomb. The success of the various Oppenheimer movie features shows that we still are interested in that episode; indeed the dangers of nuclear war have not decreased in the last decades, in contrary. Complexity theory was in vogue in the 1980es, triggered by notions popularized in the 1970ies by computer scientists like Michael Garey and David Johnson (their book gives interesting background on how all these notions were finalized, especially thanks to Donald Knuth who would poll his friends on what are the best words.) I myself did learn these notions only tangentially in logic or computer science courses. More in detail in Specker seminar, where we students would just be thrown into the water by presenting recent research papers. I myself had to present a paper on graph isomorphism (it was one of three talks I gave in the Specker seminars, one on the God number for the Rubik Cube, one about the complexity of graph isomorphism and one about a non-standard analysis approach to Fuerstenberg’s proof of the van Der Wearden theorem.

The Swiss writer Friedrich Duerrenmatt had written in 1961 the play “Die Physiker” which brings up the dilemma which scientists face when developing new knowledge and technology that comes with it. Of similar style is the brilliant stage play “Travelling Salesman (2012)”. I like it if a movie or book bothers getting to some math and not completely trivializes it (or worse, puts wrong parts ). The topic of NP completeness is still “Kitsch” uncultivated taste) and “Klischee” (stereotype) in the sense that it has penetrated everywhere similarly as “chaos theory” or “quantum mechanics” or “black hole theory” or “incompleteness”. In that movie, the authors at least bothered to look up some of the real literature. In this case the book “Computers and Intractability” of Michael R. Garey (1945-) and David S. Johnson (1945-2016) is discussed. The dialogues are pretty good and shows challenges which humanity would have to cope in the case that a group of mathematics would actually reduce NP problems to P problems using a “non-deterministic processor”. The authors of the movie could not resist referring to Oppenheimer or Goedel (again Kitsch in the sense of objects that appeal to popular taste) [I have to add the side remark that I love any kind of mathematical Kitsch, a subject has to earn it! A mathematical topic that does not make it into Kitsch is usually just also not so interesting]. The movie of 2012 mentions in the introduction (as a text preamble) a letter of Kurt Goedel to to John von Neumann of March 20, 1956 sent from Princeton (von Neumann died less than a year later in February 1958) Here it is in text form (I replaced just all non-ASCII symbols from this source [PDF]).

Dear Mr. von Neumann, With the greatest sorrow I have learned of your illness. The news came to me as quite unexpected. Morgenstern already last summer told me of about of weakness you once had, but at that time he thought that this was not of any greater significance. As I hear, in the last months you have undergone a radical treatment and I am happy that this treatment was successful as desired, and that you are now doing better. I hope and wish for you that your condition will soon improve even more and that the newest medical discoveries, if possible, will lead to a complete recovery. Since you now, as I hear, are feeling stronger, I would like to allow myself to write you about a mathematical problem, of which your opinion would very much interest me: One can obviously easily construct a Turing machine, which for every formula F in first order predicate logic and every natural number n, allows one to decide if there is a proof of F of length n (length = number of symbols). Let f(F,n) be the number of steps the machine requires for this and let f(n) = max F f(F,n). The question is how fast f(n) grows for an optimal machine. One can show that f(n) larger or equal than k · n. If there really were a machine with f(n) smaller or equal than k · n (or even less or equal than k·n2), this would have consequences of the greatest importance. Namely, it would obviously mean that in spite of the undecidability of the Entscheidungsproblem, the mental work of a mathematician concerning Yes-or-No questions could be completely replaced by a machine. After all, one would simply have to choose the natural number n so large that when the machine does not deliver a result, it makes no sense to think more about the problem. Now it seems to me, however, to be completely within the realm of possibility that f(n) grows that slowly. Since it seems that f(n) larger or equal than k · n is the only estimation which one can obtain by a generalization of the proof of the undecidability of the Entscheidungsproblem and after all f(n) ~ k . n (or ~ k . n2) only means that the number of steps as opposed to trial and error can be reduced from N to log N (or (log N)2). However, such strong reductions appear in other finite problems, for example in the computation of the quadratic residue symbol using repeated application of the law of reciprocity. It would be interesting to know, for instance, the situation concerning the determination of primality of a number and how strongly in general the number of steps in finite combinatorial problems can be reduced with respect to simple exhaustive search. I do not know if you have heard that “Post’s problem”, whether there are degrees of unsolvability among problems of the form exists y such that G(y,x), where G is recursive, has been solved in the positive sense by a very young man by the name of Richard Friedberg. The solution is very elegant. Unfortunately, Friedberg does not intend to study mathematics, but rather medicine (apparently under the influence of his father). By the way, what do you think of the attempts to build the foundations of analysis on ramified type theory, which have recently gained momentum? You are probably aware that Paul Lorenzen has pushed ahead with this approach to the theory of Lebesgue measure. However, I believe that in important parts of analysis non-eliminable impredicative proof methods do appear. I would be very happy to hear something from you personally. Please let me know if there is something that I can do for you. With my best greetings and wishes, as well to your wife, Sincerely yours, Kurt Goedel. P.S. I heartily congratulate you on the award that the American government has given to you.